Difference between revisions of "SLab:Systems"

Revision as of 16:55, 4 October 2010

Schuster Lab Computing Resources

linne cluster

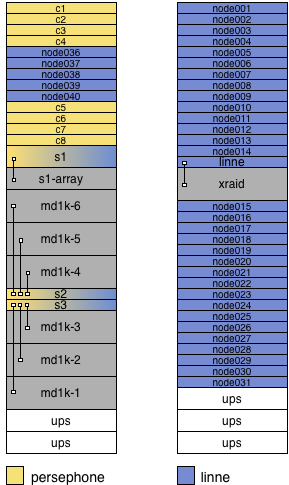

The linne cluster runs SGE 6.0u6 and can be used by anyone to submit arbitrary jobs. In addition, the linne cluster runs BioTeam iNquiry, which can be accessed here.

- head node: linne.bx.psu.edu
  - Apple Xserve G5
  - Two 2.3 GHz PowerPC G5 processors
  - 6GB RAM
  - 75GB /scratch
  - MacOS X 10.4

- 31 compute nodes: node001.linne.bx.psu.edu through node031.linne.bx.psu.edu
  - Apple Xserve G5
  - Two 2.3 GHz PowerPC G5 processors
  - 2GB RAM
  - 75GB /scratch
  - MacOS X 10.4

- 5 compute nodes: node036.linne.bx.psu.edu through node040.linne.bx.psu.edu
  - Dell PowerEdge 1950
  - Two quad-core 2.83 GHz Xeon E5440 processors (a total of 8 cores)
  - 16GB RAM
  - 100GB /scratch
  - Red Hat Enterprise Linux 4.5

persephone cluster

The persephone cluster is a member of the central BX SGE installation. Queue status can be seen at http://qstat.bx.psu.edu

Physical specifications can be seen in the Ganglia Physical View for Persephone: http://ganglia.bx.psu.edu/?p=2&c=persephone

There is one global all.q, with access restrictions that limit jobs to the appropriate nodes. It is essential that you specify your job's exact requirements (memory, number of CPUs, etc.) when submitting with qsub. c1 and c2 are in a special 454pipeline.q, used for off-rig analysis of 454 Titanium runs. Only the FLX sequencer users (schuster-flx[1234]) can submit to that queue.
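As a rough illustration of stating requirements explicitly, the sketch below assembles a qsub command line in Python. It only builds the argument list and does not submit anything. The `-cwd`, `-l`, and `-pe` options are standard SGE qsub options, but the memory resource name (`h_vmem`), the parallel environment name (`smp`), and the script name `align.sh` are assumptions for illustration; check the BX SGE documentation for the names configured on this installation.

```python
import shlex


def build_qsub_command(script, memory="4G", slots=1, pe_name="smp"):
    """Build a qsub argument list that states memory and CPU needs explicitly.

    Assumptions (not taken from this page): the memory resource is called
    h_vmem and the parallel environment is called smp. Both are
    site-specific and may differ on the BX SGE installation.
    """
    # -cwd runs the job from the current working directory;
    # -l requests a resource, here a memory limit.
    cmd = ["qsub", "-cwd", "-l", f"h_vmem={memory}"]
    if slots > 1:
        # -pe <name> <n> requests n slots in the named parallel environment.
        cmd += ["-pe", pe_name, str(slots)]
    cmd.append(script)
    return cmd


if __name__ == "__main__":
    # Print the command instead of running it, so it can be reviewed first.
    print(shlex.join(build_qsub_command("align.sh", memory="8G", slots=4)))
```

Printing the command rather than executing it makes it easy to confirm the requested resources before the job reaches the scheduler.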

s1 (s1.persephone.bx.psu.edu and s1.linne.bx.psu.edu)

s1 is dual-homed: it is connected to both the persephone and linne network switches. s1 can be used by anyone to run arbitrary jobs. It is especially useful for jobs that need a lot of local disk space.

- HP ProLiant DL380 (G5)

- Two quad-core 3.0 GHz Xeon X5450 processors (a total of 8 cores)

- 16GB RAM

- 3TB /scratch

- CentOS 5.5
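Because s1 is shared and is mainly useful for its large /scratch, it can help to check free space before starting a disk-heavy job. The following is a minimal sketch using only the Python standard library; the helper name `has_free_space` and the 500 GB threshold are hypothetical, chosen for illustration.

```python
import shutil


def has_free_space(path, required_bytes):
    """Return True if the filesystem holding `path` has at least
    `required_bytes` bytes free."""
    return shutil.disk_usage(path).free >= required_bytes


if __name__ == "__main__":
    needed = 500 * 1024**3  # hypothetical requirement: 500 GB
    try:
        ok = has_free_space("/scratch", needed)
    except FileNotFoundError:
        # /scratch only exists on the lab machines.
        print("/scratch not found on this host")
    else:
        print("enough space on /scratch" if ok else "not enough space on /scratch")
```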